Organizations deploying AI must address legal risks and accountability frameworks. This Artificial Intelligence Implementation Liability Advisory Letter provides essential guidance for executives on managing algorithmic bias, data privacy, and regulatory compliance. Understanding professional liability supports responsible innovation and reduces the risk of litigation. To help you formalize your corporate policy, below are some ready-to-use templates.

Letter Samples List

- Client Confidentiality and Artificial Intelligence Tool Liability Disclosure Letter

- Law Firm Generative Artificial Intelligence Malpractice Liability Warning Letter

- Legal Counsel Artificial Intelligence Risk and Liability Advisory Letter

- Artificial Intelligence Vendor Contract Liability Indemnification Letter

- Legal Practice Automated Decision-Making Liability Assessment Letter

- Attorney Ethics and Artificial Intelligence Implementation Liability Advisory Letter

- Law Firm Artificial Intelligence Data Breach Liability Notification Letter

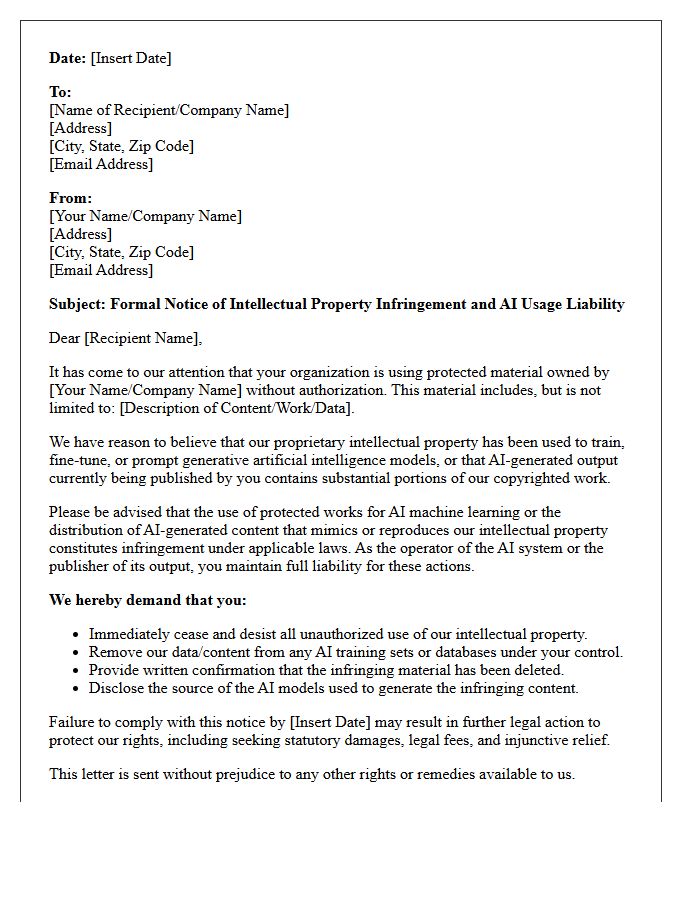

- Intellectual Property Infringement and Artificial Intelligence Usage Liability Letter

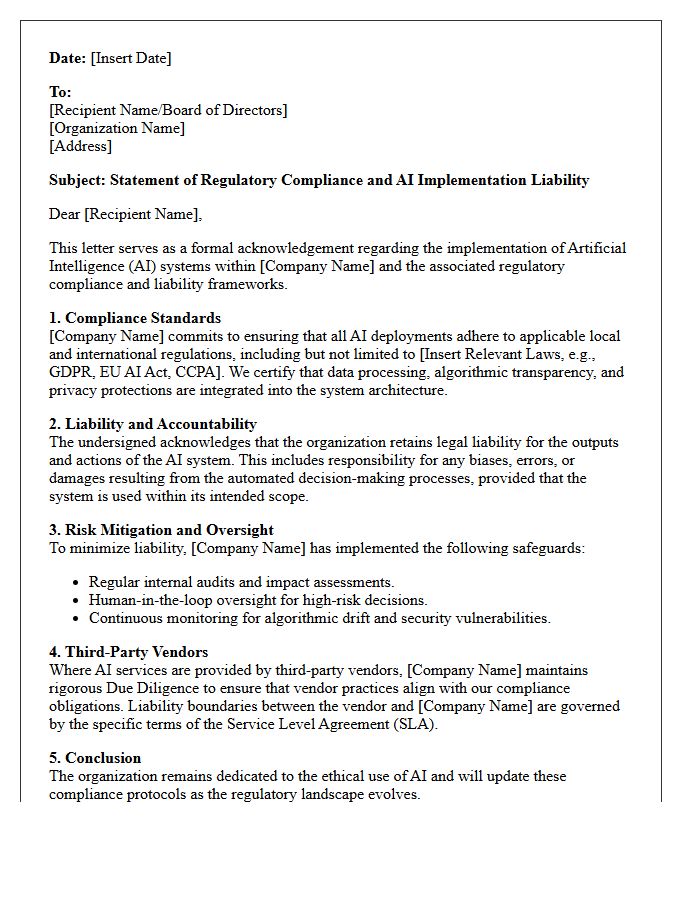

- Regulatory Compliance and Artificial Intelligence Implementation Liability Letter

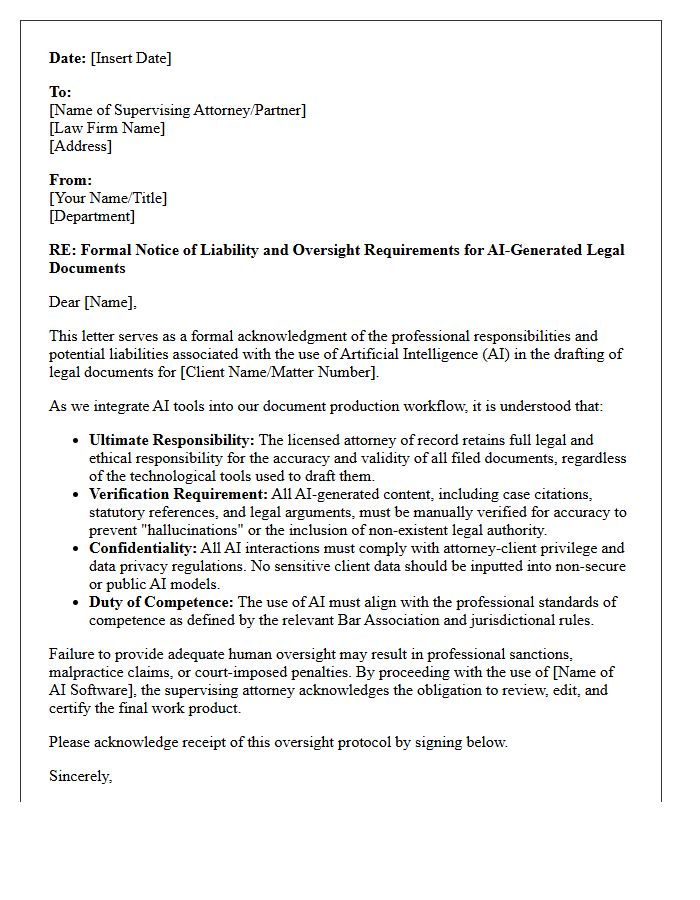

- Legal Document Drafting Artificial Intelligence Oversight Liability Letter

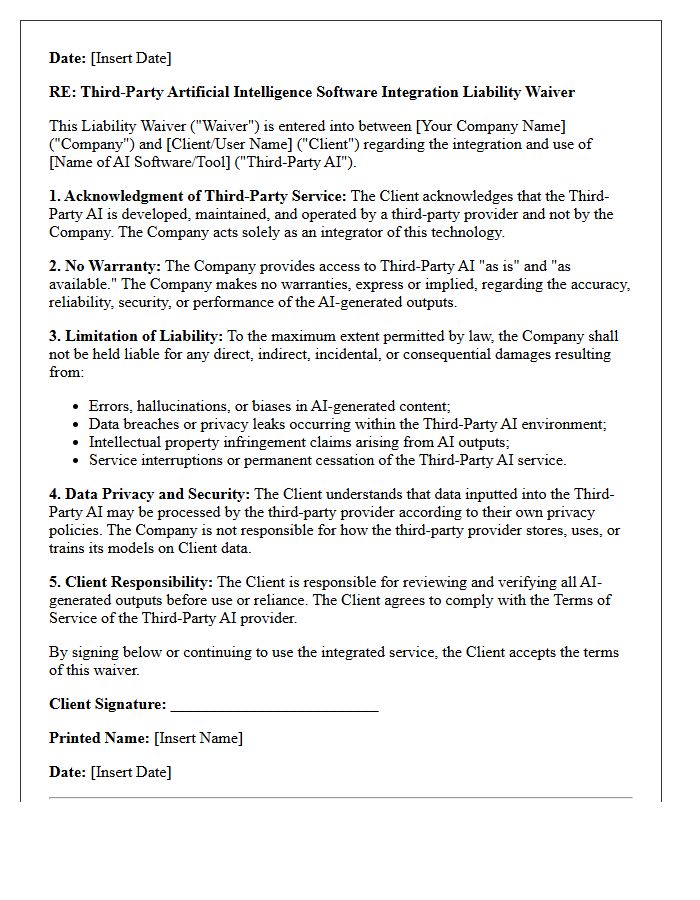

- Third-Party Artificial Intelligence Software Integration Liability Waiver Letter

- Law Firm Artificial Intelligence Bias and Discrimination Liability Caution Letter

- Partner Fiduciary Duty and Artificial Intelligence Implementation Liability Letter

Client Confidentiality and Artificial Intelligence Tool Liability Disclosure Letter

A Client Confidentiality and Artificial Intelligence Tool Liability Disclosure Letter is a legal document used to inform clients when AI technologies process their sensitive data. It explains how information is handled, addresses potential security risks, and clarifies the liability limitations of the service provider. By signing, clients acknowledge the use of third-party algorithms, which promotes transparency and helps protect professionals from legal claims arising from AI-generated errors or data breaches. This disclosure is essential for maintaining ethical standards and data privacy compliance in a modern digital workflow.

Law Firm Generative Artificial Intelligence Malpractice Liability Warning Letter

A law firm generative artificial intelligence malpractice liability warning letter is a formal notice regarding the ethical risks of using large language models (LLMs) in legal practice. It highlights potential professional negligence stemming from AI-generated hallucinations, breaches of client confidentiality, and a lack of human oversight. Firms must implement strict internal compliance protocols to mitigate malpractice liability. Failure to disclose AI usage or to verify legal citations can lead to severe disciplinary action, which underscores the need for transparency and rigorous quality control when integrating automated tools into legal workflows.

Legal Counsel Artificial Intelligence Risk and Liability Advisory Letter

A Legal Counsel Artificial Intelligence Risk and Liability Advisory Letter identifies legal frameworks and practical measures for mitigating algorithmic bias and intellectual property infringement. This formal document outlines statutory compliance requirements under emerging regulations such as the EU AI Act. It provides guidance on data privacy, vicarious liability, and transparency standards to protect organizations from litigation. By assessing contractual indemnification and operational risks, the advisory letter helps ensure that AI integration aligns with fiduciary duties while minimizing exposure to regulatory penalties and third-party claims in an evolving legal landscape.

Artificial Intelligence Vendor Contract Liability Indemnification Letter

An Artificial Intelligence Vendor Contract Liability Indemnification Letter is a critical document that defines how legal responsibility is allocated between the client and the AI vendor. It outlines the vendor's obligation to protect the client against losses arising from intellectual property infringement, data breaches, or algorithmic errors. Understanding the scope of these indemnity clauses is essential for risk management. Clients must ensure the letter includes clear liability caps and specific protections against third-party claims resulting from AI-generated outputs, so that the provider remains accountable for the performance and compliance of its proprietary technology.

Legal Practice Automated Decision-Making Liability Assessment Letter

A Legal Practice Automated Decision-Making Liability Assessment Letter is a formal document used to evaluate legal accountability when AI systems produce biased or erroneous outcomes. It identifies potential breaches of duty, analyzing whether algorithmic negligence or a lack of human oversight led to damages. This letter serves as a risk mitigation tool, outlining the applicable liability framework and the evidentiary requirements for litigation. By clearly establishing the causal link between automated logic and legal harm, it enables practitioners to address algorithmic transparency and uphold professional standards in digital decision-making environments.

Attorney Ethics and Artificial Intelligence Implementation Liability Advisory Letter

The Attorney Ethics and Artificial Intelligence Implementation Liability Advisory Letter provides critical ethical guidance for legal professionals. It emphasizes that while AI can enhance efficiency, attorneys retain non-delegable responsibilities regarding client confidentiality, competence, and supervision. Lawyers must maintain technological competence to avoid malpractice and ethical breaches. The letter warns that ultimate liability for AI-generated errors remains with the human practitioner, which requires rigorous verification of all automated outputs to uphold the integrity of the judicial process and protect client interests in an evolving digital landscape.

Law Firm Artificial Intelligence Data Breach Liability Notification Letter

A Law Firm Artificial Intelligence Data Breach Liability Notification Letter informs clients that a security incident involving the firm's AI tools has compromised their sensitive information. Law firms must disclose the specific vulnerabilities involving AI processors to comply with data privacy regulations. This document outlines the scope of exposure, the remedial actions taken, and the potential legal liabilities, including implications for attorney-client privilege. Clients should review these letters to understand available remedies and identity protection measures. Timely notification is essential to mitigate malpractice risks and maintain ethical transparency regarding the firm's reliance on automated systems and third-party data handlers.

Intellectual Property Infringement and Artificial Intelligence Usage Liability Letter

An Intellectual Property Infringement and Artificial Intelligence Usage Liability Letter is a formal notice regarding the unauthorized use of copyrighted materials by AI systems. It asserts legal accountability against organizations that use generative tools trained on or ingesting protected data without consent. Receiving such a letter signals a potential copyright infringement claim and typically demands immediate remedial action. Understanding these documents is essential for navigating the complex intersection of AI training datasets and proprietary rights, mitigating financial risk, and ensuring compliance with evolving digital ownership laws.

Regulatory Compliance and Artificial Intelligence Implementation Liability Letter

A regulatory compliance and AI implementation liability letter serves as a formal legal instrument to outline accountability when deploying automated systems. It explicitly defines the responsibilities of developers and users regarding data privacy, algorithmic bias, and transparency standards. By documenting adherence to frameworks like the EU AI Act, organizations mitigate potential legal risks and financial penalties. This letter is essential for establishing clear liability boundaries, ensuring that all parties understand their obligations in maintaining ethical oversight and technical safety throughout the artificial intelligence lifecycle.

Legal Document Drafting Artificial Intelligence Oversight Liability Letter

When artificial intelligence is used for legal document drafting, the responsible attorney retains ultimate professional liability for all outputs. An oversight liability letter provides a formal framework for managing these risks by requiring human review of AI-generated content. It establishes clear accountability for errors, hallucinations, and data privacy breaches. Implementing such documentation is essential for ethical compliance and for mitigating malpractice claims. While technology increases efficiency, this protective measure ensures that the lawyer's signature remains a guarantee of accuracy, legal validity, and adherence to jurisdictional standards within the evolving legal landscape.

Third-Party Artificial Intelligence Software Integration Liability Waiver Letter

A Third-Party Artificial Intelligence Software Integration Liability Waiver Letter is a legal document designed to allocate risk when incorporating external AI tools into business workflows. This waiver explicitly states that the service provider is not responsible for algorithmic biases, data inaccuracies, or security vulnerabilities inherent in third-party software. By signing, clients acknowledge the experimental nature of AI and waive their right to seek damages for automated errors. It is an important instrument for liability protection, helping shield companies from unforeseen technical failures or intellectual property complications arising from non-proprietary artificial intelligence systems.

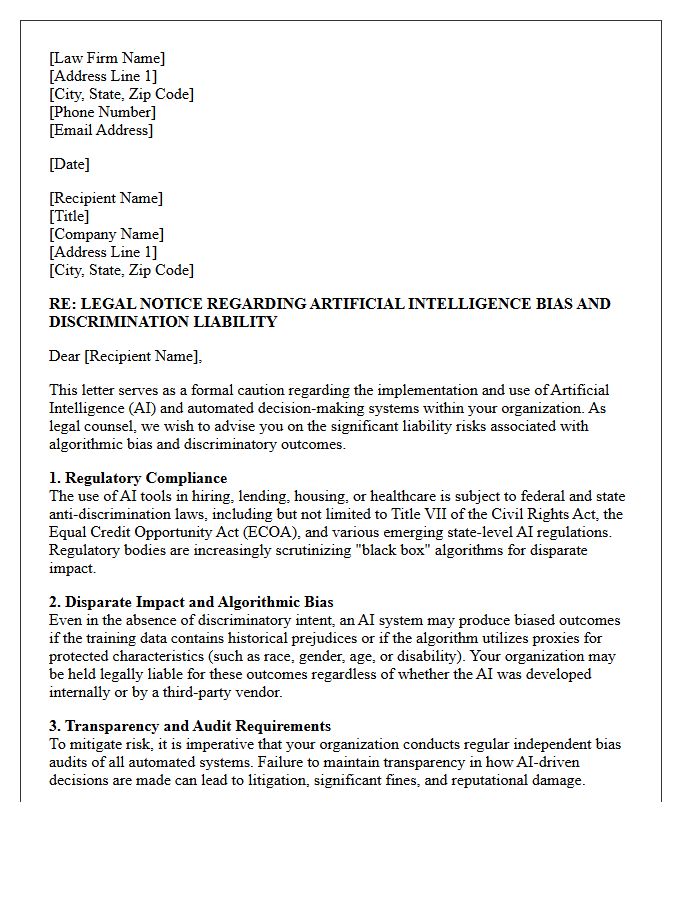

Law Firm Artificial Intelligence Bias and Discrimination Liability Caution Letter

A law firm caution letter regarding AI bias and discrimination liability warns clients that automated systems can inherit human prejudices. Firms must ensure algorithmic transparency to mitigate legal risks under civil rights laws. This document emphasizes that legal responsibility remains with the human operator, regardless of software errors. Proactive compliance audits and rigorous data testing are essential to prevent disparate impact. Understanding these liability frameworks is crucial for protecting organizations from litigation arising from discriminatory artificial intelligence outputs or flawed decision-making processes.

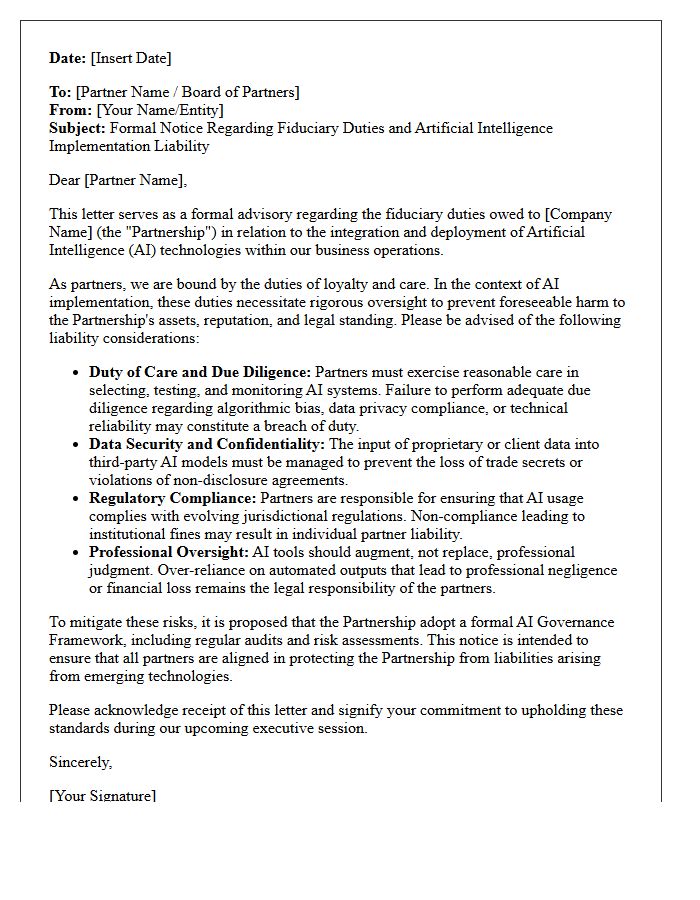

Partner Fiduciary Duty and Artificial Intelligence Implementation Liability Letter

A Partner Fiduciary Duty and AI Implementation Liability Letter is a critical document addressing the legal obligations of partners when deploying automated systems. It serves to clarify that fiduciary responsibility remains personal, even when delegating decisions to algorithms. This letter outlines specific liability frameworks, ensuring partners exercise due diligence and oversight to prevent breaches of loyalty or care. By formally documenting these standards, firms mitigate risks associated with algorithmic bias or financial loss, protecting the partnership from litigation arising from artificial intelligence mismanagement or lack of professional supervision.

What is the purpose of an Artificial Intelligence Implementation Liability Advisory Letter?

An Artificial Intelligence Implementation Liability Advisory Letter serves as a formal legal document that outlines the potential risks, ethical considerations, and compliance requirements associated with deploying AI technologies. It aims to define the boundaries of professional responsibility and mitigate legal exposure for organizations and stakeholders involved in the implementation process.

Who are the primary parties involved in an AI Liability Advisory Letter?

The primary parties typically include the technology provider or consultant issuing the advice and the client organization receiving it. The letter may also address third-party stakeholders, such as end-users or regulatory bodies, to clarify how liability is partitioned between the developer of the AI model and the entity responsible for its operational deployment.

What specific risks does an AI Liability Advisory Letter address?

The letter addresses critical risks including algorithmic bias, data privacy violations under frameworks such as the GDPR or CCPA, intellectual property infringement, and "hallucinations" or other errors in output. It also provides guidance on liability arising from autonomous decision-making and on the lack of transparency in "black box" AI systems.

How does this advisory letter help in achieving regulatory compliance?

The letter identifies applicable local and international regulations, such as the EU AI Act or industry-specific guidelines. By documenting the recommended safeguards and due diligence steps, the advisory letter provides a defensive audit trail that demonstrates a "duty of care" and proactive adherence to evolving legal standards.

Can an AI Liability Advisory Letter eliminate all legal risks?

No, an advisory letter cannot eliminate all risks, as AI technology and its governing laws are constantly evolving. However, it serves as a vital risk-management tool that helps organizations understand their exposure, implement mitigation strategies, and establish a formal record of informed consent regarding the inherent uncertainties of artificial intelligence.
